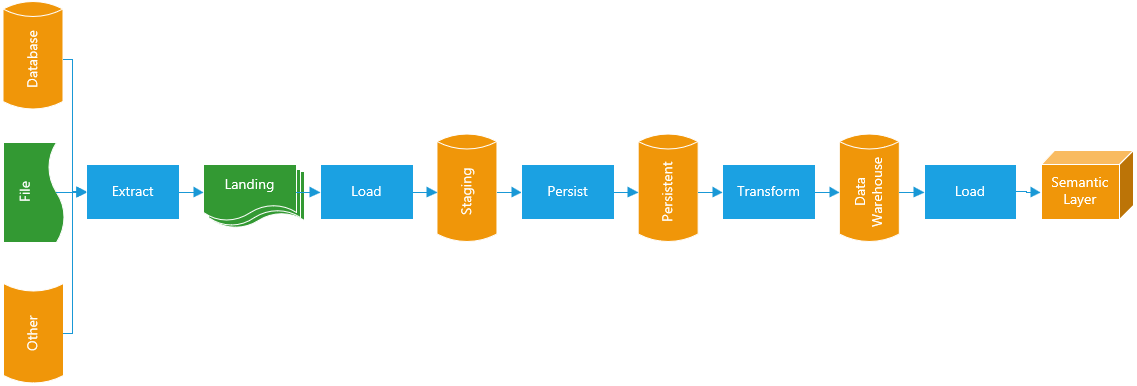

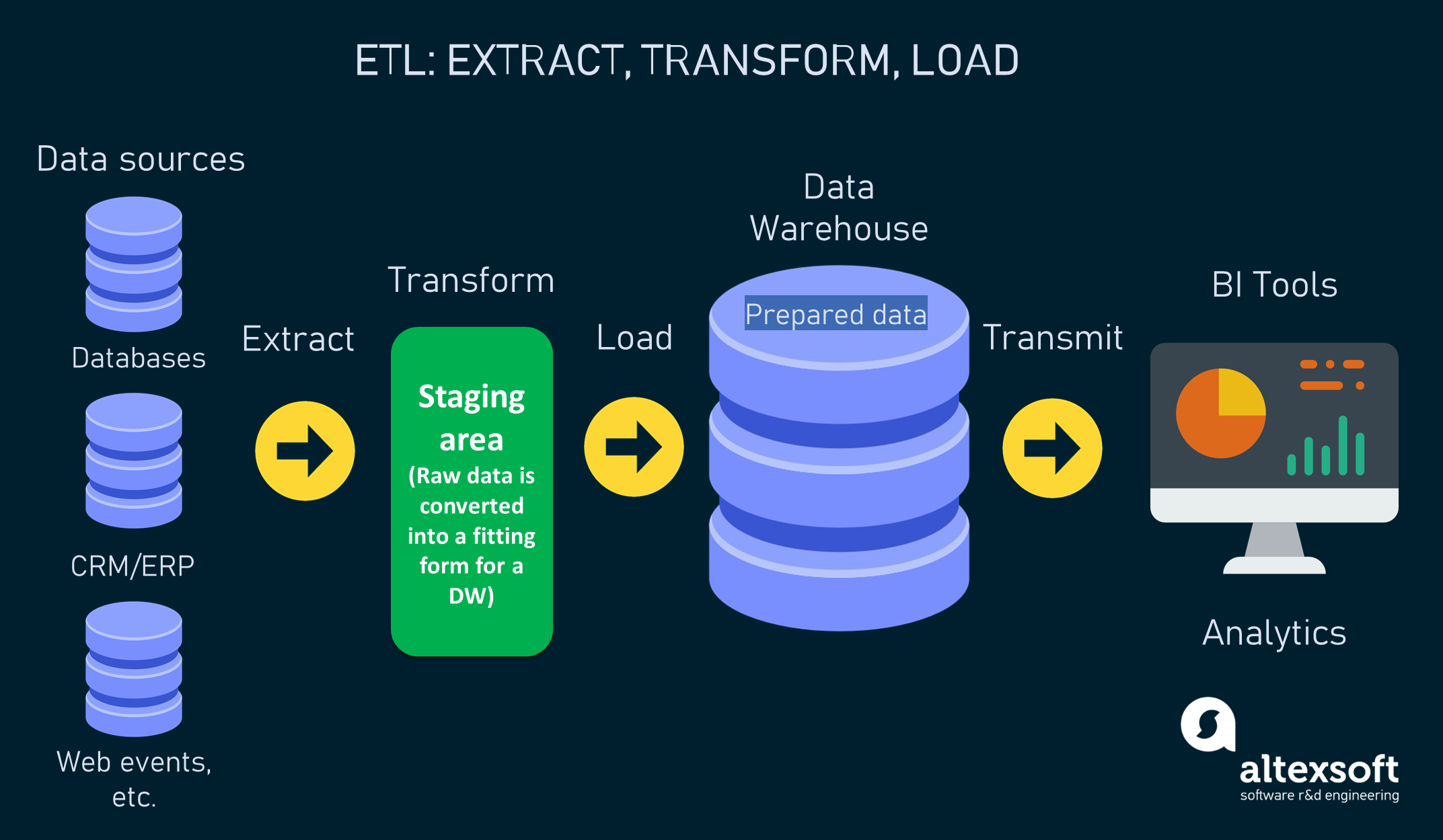

This can be done in the traditional way with ETL (extract, transform, load) tooling and data warehouses, but equally well in a new way in the cloud. Here, the terminology is about streaming data, data pipelines, data lakes and microservices.

Data science and machine learning models only deliver value when they are in production. Data engineering is crucial for operationalising data science and advanced analytics. Someone in the role of Data Scientist works on the substantive, often mathematical side of developing a data science or machine learning model. A Data Engineer concentrates on the technology, design and architecture of data processing. Getting a data science model into production, keeping it there and continually developing it requires this kind of specialist knowledge.

Data Engineer, Business Intelligence consultant or ETL developer?

Data Engineer is a rather new job title. In the past, this role was also called ETL developer, BI developer, Data Specialist or Business Intelligence consultant. The roles are not exactly the same, but they certainly overlap.

Will you soon be working on this as part of our team? Apply for our Data Engineer job vacancy.

Extract, transform, and load (ETL) improves business intelligence and analytics by making the process more reliable, accurate, detailed, and efficient.

Historical context: ETL gives deep historical context to the organization's data. An enterprise can combine legacy data with data from new platforms and applications. You can view older datasets alongside more recent information, which gives you a long-term view of data.

Consolidated data view: ETL provides a consolidated view of data for in-depth analysis and reporting. Managing multiple datasets demands time and coordination and can result in inefficiencies and delays. ETL combines databases and various forms of data into a single, unified view. The data integration process improves data quality and saves the time required to move, categorize, or standardize data. This makes it easier to analyze, visualize, and make sense of large datasets.

Accurate data analysis: ETL gives more accurate data analysis to meet compliance and regulatory standards. You can integrate ETL tools with data quality tools to profile, audit, and clean data, ensuring that the data is trustworthy.

Task automation: ETL automates repeatable data processing tasks for efficient analysis. ETL tools automate the data migration process, and you can set them up to integrate data changes periodically or even at runtime. As a result, data engineers can spend more time innovating and less time managing tedious tasks like moving and formatting data.

ETL originated with the emergence of relational databases, which store data in tables for analysis. Early ETL tools attempted to convert data from transactional formats to relational formats for analysis.

Raw data was typically stored in transactional databases that supported many read and write requests but did not lend themselves well to analytics. You can think of each transaction as a row in a spreadsheet. For example, in an ecommerce system, the transactional database stored the purchased item, customer details, and order details in one transaction. Over a year, the database accumulated a long list of transactions, with repeated entries for any customer who purchased multiple items during that year. Given this data duplication, it became cumbersome to analyze the most popular items or purchase trends for the year.
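As a rough illustration of the traditional ETL flow for the ecommerce case described above, the sketch below extracts flat transaction rows, transforms them into separate customer and order records, and loads them into relational tables that are easy to query. Everything here is an assumption for illustration: the sample records, the table and column names, and the function names are hypothetical, and an in-memory SQLite database stands in for a real data warehouse.

```python
import sqlite3

# Hypothetical flat transaction records: one row per purchase, with the
# customer details repeated on every row (the duplication described above).
RAW_TRANSACTIONS = [
    {"order_id": 1, "customer_email": "ana@example.com", "customer_name": "Ana", "item": "kettle"},
    {"order_id": 2, "customer_email": "ana@example.com", "customer_name": "Ana", "item": "mug"},
    {"order_id": 3, "customer_email": "ben@example.com", "customer_name": "Ben", "item": "mug"},
    {"order_id": 4, "customer_email": "Ana@example.com ", "customer_name": " Ana ", "item": "Mug"},
]


def extract():
    """Extract: read raw rows from the (simulated) transactional source."""
    return list(RAW_TRANSACTIONS)


def transform(rows):
    """Transform: standardize values and split rows into customers and orders."""
    customers = {}  # email -> name, so each customer is stored only once
    orders = []
    for row in rows:
        email = row["customer_email"].strip().lower()
        customers.setdefault(email, row["customer_name"].strip())
        orders.append((row["order_id"], email, row["item"].strip().lower()))
    return customers, orders


def load(conn, customers, orders):
    """Load: write the cleaned records into relational tables."""
    conn.execute("CREATE TABLE customers (email TEXT PRIMARY KEY, name TEXT)")
    conn.execute(
        "CREATE TABLE orders ("
        "order_id INTEGER PRIMARY KEY, "
        "email TEXT REFERENCES customers(email), "
        "item TEXT)"
    )
    conn.executemany("INSERT INTO customers VALUES (?, ?)", list(customers.items()))
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", orders)
    conn.commit()


def most_popular_items(conn):
    """Analysis query: items ranked by number of purchases."""
    return conn.execute(
        "SELECT item, COUNT(*) AS n FROM orders GROUP BY item ORDER BY n DESC, item"
    ).fetchall()


def run_pipeline():
    """Run extract, transform, and load against an in-memory database."""
    conn = sqlite3.connect(":memory:")
    customers, orders = transform(extract())
    load(conn, customers, orders)
    return conn
```

Calling `most_popular_items(run_pipeline())` ranks items by purchase count without repeating customer details on every row. A production pipeline would read from a real source system and handle incremental updates, which this sketch leaves out.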
